Autonomous vehicles & IoT

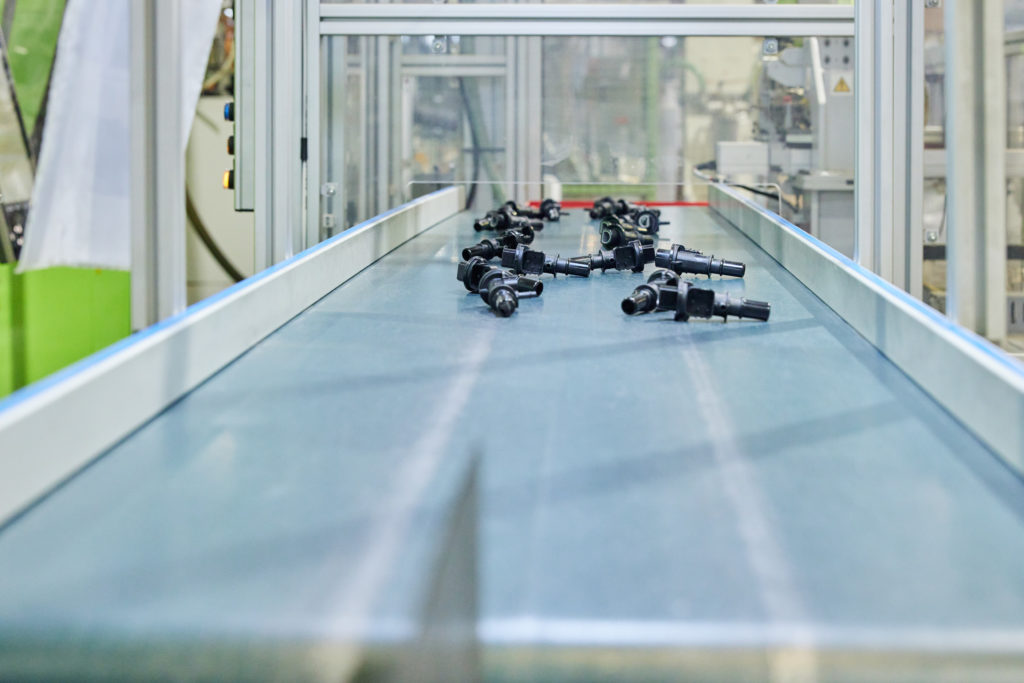

Every electrical appliance we use, even our cars, embeds electromechanical components. These are, for example, the countless switches and sensors built into the devices we use daily. Because of the crucial functions they perform, these components need to go through rigorous production processes, which involve intense testing and close monitoring.

For this reason, a typical production unit for such a component often requires the integration of machinery from diverse vendors, each based on its own proprietary technology. This makes gathering data from production units for analytics a manual and cost-intensive endeavour. What if, thought researchers at the University of Luxembourg’s Interdisciplinary Centre for Security, Reliability and Trust (SnT), we could build a layer able to collect all data from different devices in a standardised manner?

This was the goal of the first project that the Security Design and Validation (SerVal) research group at SnT launched in partnership with Cebi, the Luxembourg-based global producer of electromechanical components, back in April 2018. The project, entitled Industry4.0@Cebi, focused on interoperability, with the goal of implementing open communication protocols and standards across proprietary, vendor-locked devices.

With the support of the FNR, in June 2022, SnT and Cebi started working on a follow-up project to Industry4.0@Cebi entitled Uptime4.0 (Robust Predictive Maintenance for Industry 4.0). The project aims to design a decision-making system able to act on the new data being gathered to further optimise maintenance and production processes. From a scientific viewpoint, the project investigates how artificial intelligence (AI) techniques can be used both to predict the remaining useful life (RUL) of the machinery in each production unit, in order to optimise maintenance practices (moving from reactive and preventive to predictive maintenance policies), and to predict product quality and waste production over time.

To this end, SnT researchers are relying on a special type of machine learning called Reinforcement Learning (RL). The technique involves training an RL agent to make decisions in a given context. For example, based on the data gathered, the RL agent could deem a certain failure more likely to occur in the near future, and thus schedule a maintenance action to inspect, maintain, or replace a component. “The goal is to plan maintenance interventions as close as possible to the failure time, to get the most use out of a single part and avoid generating unnecessary waste, while still preventing the unplanned downtime we would run into if we were to act too late,” says Dr. Sylvain Kubler, research scientist at SnT, who works on the project alongside Prof. Yves Le Traon, the principal investigator, and doctoral researchers Marcelo Luis Ruiz Rodriguez and Dorian Joubaud.
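To make the trade-off Dr. Kubler describes more concrete, here is a minimal sketch of tabular Q-learning on a toy machine. It is not the Uptime4.0 system: the five wear levels, the reward values, the `step` model, and all function names are assumptions invented for illustration. The agent earns a small reward for each step of production, pays a modest cost for a planned intervention, and pays a large penalty if it runs a worn-out part into failure.

```python
import random

N_WEAR = 5            # hypothetical wear levels: 0 (new) .. 4 (about to fail)
RUN, MAINTAIN = 0, 1  # the two actions available to the agent


def step(wear, action):
    """Toy machine model. All rewards are made-up illustration values."""
    if action == MAINTAIN:
        return 0, -3.0    # planned intervention: modest cost, part is reset
    if wear == N_WEAR - 1:
        return 0, -20.0   # ran a worn-out part: failure and unplanned downtime
    return wear + 1, 1.0  # normal production, the part wears a little more


def train(steps=20_000, alpha=0.1, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a Q-table with epsilon-greedy tabular Q-learning."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_WEAR)]
    wear = 0
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.choice((RUN, MAINTAIN))  # explore
        else:
            action = max((RUN, MAINTAIN), key=lambda a: q[wear][a])  # exploit
        nxt, reward = step(wear, action)
        # Standard Q-learning update towards reward + discounted best next value.
        q[wear][action] += alpha * (reward + gamma * max(q[nxt]) - q[wear][action])
        wear = nxt
    return q


def greedy_policy(q):
    """Best known action for each wear level."""
    return [max((RUN, MAINTAIN), key=lambda a: row[a]) for row in q]


if __name__ == "__main__":
    policy = greedy_policy(train())
    for wear, action in enumerate(policy):
        print(f"wear level {wear}: {'MAINTAIN' if action == MAINTAIN else 'RUN'}")
```

With these assumed costs, maintaining early wastes usable part life and maintaining too late triggers the failure penalty, so the learned policy keeps the machine running until the last wear level and only then intervenes. A real deployment would replace the hand-written `step` function with sensor data and a far richer state.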

Once the maintenance interventions are scheduled, they can each be dispatched to the right technician, batched with other interventions, or planned in a certain order to minimise downtime. According to the research team's calculations, using AI to optimise maintenance brings a massive increase in uptime and a net decrease in downtime (see figure 1).

In addition to its benefits for productivity and minimising waste, Uptime4.0 is a chance for SnT researchers to conduct fundamental research on the integration of robust AI in production processes. A key factor that the research team is considering in their work is the trustworthiness of AI. In line with the seven requirements set by the European Commission for trustworthy AI, the team is looking at aspects such as the robustness of the AI to noisy data, for example readings from a faulty sensor that could pollute the data set and thus lead the AI to wrong findings, recommendations or decisions. Another important element is the transparency of AI (also known as eXplainable AI, or XAI), so that the reasoning the AI followed to reach its decision is clearly explained to human agents. Other aspects covered by this set of requirements include human agency, accountability, non-discrimination, and fairness, all with the goal of developing industrial AI that can be integrated in a secure, organic, and feasible manner.

“Developing trustworthy AI is a challenge that both the scientific and the industrial world need to tackle with the greatest care to achieve safe, fair, and sustainable growth,” said Dr. Kubler. “We are very attentive to its implications for both fundamental and applied research,” he concluded.